Picture this: You’re explaining your vision to a cinematographer, but they’re standing behind a thick glass wall. You gesture wildly, describing the sweeping crane shot you imagine, the subtle push-in that builds tension, the orbital reveal that showcases your product from every angle. They nod enthusiastically—then deliver something completely different.

This is the daily reality of AI video generation for most creators. You pour creativity into your prompts, carefully choosing words like “gracefully,” “dynamically,” or “cinematically,” hoping the algorithm will translate your vision into movement. Sometimes it works. Often it doesn’t. And you’re never quite sure why.

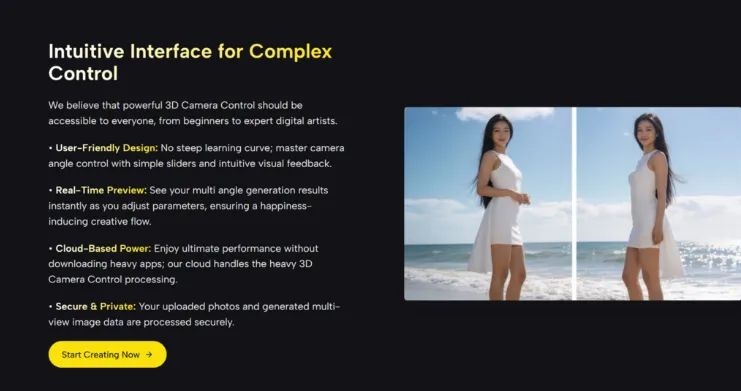

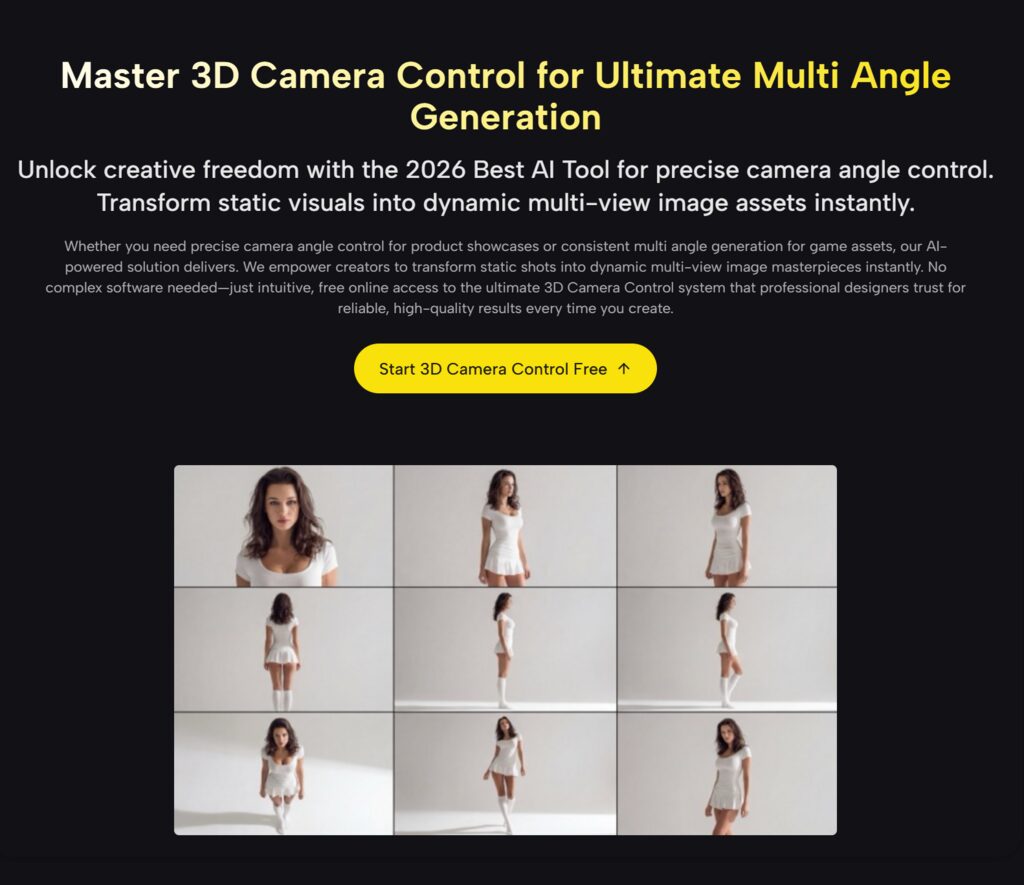

The fundamental problem isn’t your prompting skills or the AI’s capabilities—it’s the medium of communication itself. Language is beautifully expressive for emotions and concepts, but remarkably imprecise for spatial geometry. That’s where 3D camera control transforms the conversation from interpretation to specification, giving you the visual language cinematographers have used for decades.

Contents

- 1 The Hidden Language of Camera Movement

- 2 What 3D Camera Control Actually Gives You

- 3 Deconstructing Professional Camera Work

- 4 My Evolution: Three Phases of Understanding

- 5 Real Applications That Changed My Work

- 6 The Honest Challenges You’ll Face

- 7 Your Starting Point: Practical Exercises

- 8 The Broader Transformation

The Hidden Language of Camera Movement

Why Words Fail Where Numbers Succeed

I spent my first month with AI video tools convinced I just needed better prompts. I studied cinematography terminology, learned the difference between “dolly” and “truck,” memorized when to say “tilt” versus “pan.” My prompts became impressively technical: “Execute a 45-degree counter-clockwise orbital movement while maintaining subject center-frame, combined with a gradual 1.5x optical zoom.”

The AI’s response? Inconsistent at best. Sometimes close, often wildly off, occasionally spectacular—but never reliably repeatable.

The Geometry Problem

Here’s what I eventually realized: camera movement is fundamentally mathematical. A professional cinematographer doesn’t think “move gracefully around the subject”—they think “12-foot radius arc, 60 degrees of travel, 8 seconds duration, starting from camera-left at eye level.”

Those numbers create a precise spatial relationship that’s impossible to capture in descriptive language. “Slowly” to one person might mean 5 seconds; to another, 20 seconds. “Close-up” could be 2 feet away or 2 inches, depending on context.
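To make that concrete, here is a minimal Python sketch of the arithmetic hiding inside the cinematographer's sentence above. The `OrbitSpec` class and its field names are my own illustration, not part of any particular tool; only the three numbers come from the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class OrbitSpec:
    """Hypothetical container for the numbers a cinematographer would call out."""
    radius_ft: float
    sweep_deg: float
    duration_s: float

    def arc_length_ft(self) -> float:
        # Arc length = radius * angle (angle in radians).
        return self.radius_ft * math.radians(self.sweep_deg)

    def angular_speed_deg_per_s(self) -> float:
        return self.sweep_deg / self.duration_s

    def linear_speed_ft_per_s(self) -> float:
        return self.arc_length_ft() / self.duration_s


# "12-foot radius arc, 60 degrees of travel, 8 seconds duration"
shot = OrbitSpec(radius_ft=12.0, sweep_deg=60.0, duration_s=8.0)
print(f"{shot.arc_length_ft():.2f} ft of travel")      # 12.57 ft of travel
print(f"{shot.angular_speed_deg_per_s():.2f} deg/s")   # 7.50 deg/s
print(f"{shot.linear_speed_ft_per_s():.2f} ft/s")      # 1.57 ft/s
```

Three numbers pin down the path length and both speeds, which is exactly the information a word like "slowly" leaves open.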

What 3D Camera Control Actually Gives You

Let me break down the practical differences I’ve experienced:

| Creative Element | Prompt-Based Approach | 3D Camera Control Approach |
| --- | --- | --- |
| Repeatability | Regenerate hoping for similar results | Save exact parameters, reproduce perfectly |
| Experimentation | Rewrite prompt, wait for generation | Adjust slider, see preview immediately |
| Complexity | Struggle to describe multi-axis movement | Layer movements visually, test combinations |
| Communication | Explain vision in paragraphs | Show collaborators exact camera path |
| Troubleshooting | Unclear what changed between attempts | Isolate specific parameters causing issues |
| Skill Development | Learn prompt syntax and keywords | Learn actual cinematography principles |

That last point surprised me most. Using 3D camera control systems actually made me a better visual storyteller because I was learning real filmmaking principles, not just AI-specific tricks.

Deconstructing Professional Camera Work

The Three-Dimensional Space

When you work with 3D camera control, you’re manipulating your camera within a virtual space defined by three types of movement:

Circular Paths: Orbital Movement

Imagine your subject sitting at the center of a sphere. Your camera can travel along the surface of that sphere: circling horizontally (like walking around someone), vertically (like arcing from eye level up toward an overhead view while staying pointed at them), or any diagonal combination.

In my testing, horizontal orbits of 30 to 90 degrees create the most professional-looking results. Less than 30 degrees barely registers as movement; more than 90 degrees demands perspective changes that the AI struggles to render consistently. Full 360-degree orbits? They look impressive in concept but often create spatial confusion in the final output.
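The sphere picture translates directly into coordinates. The sketch below assumes a convention of my own choosing (subject at the origin, y axis pointing up, azimuth 0 on the +z axis); real tools may define their axes differently, and the function names are hypothetical.

```python
import math


def orbit_position(radius: float, azimuth_deg: float, elevation_deg: float = 0.0):
    """Camera position on a sphere centred on the subject (origin, y axis up)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = radius * math.cos(el) * math.sin(az)
    y = radius * math.sin(el)
    z = radius * math.cos(el) * math.cos(az)
    return (x, y, z)


def horizontal_orbit(radius: float, sweep_deg: float, steps: int):
    """Evenly spaced camera positions for a horizontal orbit starting at azimuth 0."""
    return [orbit_position(radius, sweep_deg * i / steps) for i in range(steps + 1)]


# A 60-degree horizontal orbit at a 10-unit radius, sampled at 5 points.
path = horizontal_orbit(radius=10.0, sweep_deg=60.0, steps=4)
for x, y, z in path:
    print(f"x={x:6.2f}  y={y:5.2f}  z={z:6.2f}")
```

Every sampled point sits exactly one radius from the subject, which is what "constant distance" means in an orbit. Passing a non-zero `elevation_deg` moves the camera up the sphere for the vertical and diagonal variants.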

Linear Paths: Tracking and Dollying

This is your camera moving through space while maintaining a fixed orientation. Moving sideways (tracking) reveals depth and layers in your scene. Moving forward (dolly-in) creates intimacy and focus. Moving backward (dolly-out) establishes context and scale.

What I’ve learned through experimentation: combine linear movement with slight rotation for more dynamic results. A pure lateral track can feel mechanical, but add a 5-10 degree rotation toward your subject, and suddenly it feels intentional and alive.

Optical Changes: Zoom and Focus

Technically not camera movement, but zoom changes alter your field of view without changing position. The classic “dolly zoom” (moving backward while zooming in, or vice versa) creates that disorienting Hitchcock effect where the subject stays the same size but the background warps.
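The dolly zoom follows from simple trigonometry: a camera with horizontal field of view `fov` at distance `d` frames a width of `2 * d * tan(fov / 2)` at the subject. Holding that width constant while `d` changes gives the field of view needed at each distance. The sketch below is a simplified pinhole-camera model with a hypothetical function name and example numbers of my own, not something taken from a specific tool.

```python
import math


def fov_for_constant_framing(frame_width: float, distance: float) -> float:
    """Horizontal field of view (degrees) that spans frame_width at the given distance."""
    return math.degrees(2.0 * math.atan(frame_width / (2.0 * distance)))


# Keep a 4 ft wide slice of the scene filling the frame while dollying in.
frame_width_ft = 4.0
for distance_ft in (10.0, 7.5, 5.0):
    fov = fov_for_constant_framing(frame_width_ft, distance_ft)
    print(f"{distance_ft:4.1f} ft away -> {fov:5.2f} degree field of view")
```

As the camera moves closer, the field of view has to widen (zoom out) to keep the subject the same size, and the reverse holds when pulling back. That opposing change is what makes the background appear to stretch or compress.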

Here’s a practical tip I wish I’d known earlier: AI handles gradual zooms much better than rapid ones. A slow 1.2x zoom over 5 seconds maintains spatial coherence; a quick 3x zoom in 2 seconds often introduces artifacts or perspective inconsistencies.
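One way to compare those two zooms is to convert each into a per-second rate. The sketch below assumes the zoom compounds smoothly (the same multiplier applied every second), which is my simplification; a given tool may interpolate zoom differently.

```python
def zoom_rate_per_second(total_factor: float, duration_s: float) -> float:
    """Constant per-second magnification multiplier that compounds to total_factor."""
    return total_factor ** (1.0 / duration_s)


gentle = zoom_rate_per_second(1.2, 5.0)   # slow 1.2x zoom over 5 seconds
abrupt = zoom_rate_per_second(3.0, 2.0)   # quick 3x zoom in 2 seconds

print(f"1.2x over 5 s: {100 * (gentle - 1):.1f}% magnification change per second")
print(f"3x over 2 s:   {100 * (abrupt - 1):.1f}% magnification change per second")
```

Under that assumption the gentle zoom changes magnification by roughly 4% each second while the abrupt one changes it by about 73%, so every frame of the fast zoom asks for a much larger change in the image.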

My Evolution: Three Phases of Understanding

Phase One: The Prompt Perfectionist

I believed better writing would solve everything. I crafted elaborate descriptions, used precise terminology, even tried writing prompts in different languages hoping for different interpretations. Results were scattershot—occasional brilliance surrounded by mediocrity.

Phase Two: The Parameter Discoverer

When I first encountered AI Video Generator Agent interfaces, I felt overwhelmed. So many sliders, angles, and numerical inputs. But I started simple—just the orbital controls. Suddenly I could specify “45 degrees clockwise, 10-foot radius” and get exactly that. The consistency was revelatory.

Phase Three: The Cinematic Designer

Now I approach projects like a director with a shot list. I pre-visualize camera movements, test them in 3D space, refine based on what actually looks good (not what sounds good), then generate. My success rate jumped from maybe 20% to around 70-75%. More importantly, my “failures” are now minor adjustments rather than complete misses.

Real Applications That Changed My Work

Client Presentations That Actually Match Concepts

I do freelance work for a B2B marketing agency in Singapore. Before 3D camera control, client presentations were awkward: “Here are five different AI generations, and hopefully one matches what we discussed.” Now I can show them the exact camera movement in preview, get approval, then generate with confidence.

Building a Visual Signature

One unexpected benefit: I’ve developed a recognizable style. I favor subtle orbital movements combined with slow push-ins—it’s become my signature. Clients now request “that smooth rotation thing you do.” That’s only possible because I can reliably reproduce specific movements.

Teaching and Collaboration

When working with other creators, I can share camera parameter files instead of trying to describe movements in text. It’s like sharing a recipe instead of describing how food tastes. The precision eliminates ambiguity.
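A parameter file can be as simple as a few named numbers. The sketch below uses a made-up JSON schema (the `CameraMove` fields are hypothetical, and the zoom and duration values are illustrative); actual tools define their own export formats, so treat this only as an illustration of the idea.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class CameraMove:
    # Hypothetical schema for illustration; real tools define their own formats.
    name: str
    orbit_deg: float
    radius_ft: float
    zoom_factor: float
    duration_s: float


def to_json(move: CameraMove) -> str:
    """Serialize a move so it can be saved to a file or sent to a collaborator."""
    return json.dumps(asdict(move), indent=2, sort_keys=True)


def from_json(text: str) -> CameraMove:
    """Rebuild the move from the shared JSON text."""
    return CameraMove(**json.loads(text))


signature = CameraMove(
    name="slow-orbit-push-in",
    orbit_deg=45.0,
    radius_ft=10.0,
    zoom_factor=1.2,
    duration_s=8.0,
)
shared = to_json(signature)
print(shared)

# The collaborator gets back exactly the same numbers.
assert from_json(shared) == signature
```

Because the file carries numbers rather than adjectives, whoever loads it starts from the same move I designed.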

The Honest Challenges You’ll Face

The Learning Curve Is Real

Don’t expect instant mastery. My first week with 3D camera control produced technically accurate but aesthetically boring shots. I had precision without taste. Understanding why certain movements work requires studying actual cinematography, not just mastering the interface.

AI Still Has Opinions

Even with perfect camera parameters, the AI sometimes prioritizes other factors. I’ve had generations where the camera path was perfect but the subject morphed unexpectedly, or the lighting shifted mid-movement. You’re collaborating with the AI, not commanding it. It typically takes me 2-4 generations to get everything aligned.

Complexity Has Limits

I’ve learned that simpler movements often work better. That elaborate seven-axis camera choreography you imagined? The AI might struggle to maintain coherence. Start with two-axis movements (orbit + zoom, or pan + dolly), master those, then gradually add complexity.

Your Starting Point: Practical Exercises

Exercise One: The Single Product Orbit

Place a simple object at center frame. Create a 60-degree horizontal orbit, 8-second duration, constant distance. Generate it. This teaches you the baseline of what consistent orbital movement looks like.

Exercise Two: The Emotional Zoom

Keep the camera static. Experiment with zoom speeds. Try a 3-second zoom versus a 10-second zoom on the same subject. Feel the difference in emotional impact. Fast zooms create urgency; slow zooms build anticipation.

Exercise Three: The Compound Movement

Combine a 45-degree orbit with a 1.3x zoom. Notice how the dual movement creates dimensionality that neither achieves alone. This is where 3D camera control shows its power—layering movements that would be nearly impossible to describe in text.
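Layering two movements amounts to sampling both on the same timeline. Here is a rough sketch of Exercise Three as keyframes, assuming a 6-second duration and 2 samples per second (both are placeholder values of mine, since the exercise does not fix them), with the orbit angle changing linearly and the zoom compounding smoothly.

```python
def compound_keyframes(orbit_deg: float, zoom_factor: float, duration_s: float, fps: int):
    """Return (time_s, orbit_angle_deg, zoom) samples for an orbit combined with a zoom."""
    frames = round(duration_s * fps)
    keyframes = []
    for i in range(frames + 1):
        t = i / frames                 # progress from 0.0 to 1.0
        angle = orbit_deg * t          # linear sweep around the subject
        zoom = zoom_factor ** t        # smooth, compounding zoom
        keyframes.append((i / fps, angle, zoom))
    return keyframes


# 45-degree orbit combined with a 1.3x zoom.
keys = compound_keyframes(orbit_deg=45.0, zoom_factor=1.3, duration_s=6.0, fps=2)
for time_s, angle, zoom in keys:
    print(f"t={time_s:3.1f}s  orbit={angle:5.2f} deg  zoom={zoom:4.2f}x")
```

Each row specifies both axes at the same instant, something a text prompt can only gesture at.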

The Broader Transformation

What excites me most isn’t just the technical improvement in my videos—it’s the shift in creative thinking. I now conceptualize projects spatially rather than verbally. I sketch camera paths before writing prompts. I think in arcs and trajectories rather than adjectives and adverbs.

This is the real promise of 3D camera control: it doesn’t just improve your AI outputs, it teaches you to think like a cinematographer. And that skill transfers everywhere—to traditional video, to photography, to visual storytelling in any medium.

The gap between “AI-generated content” and “professional work created with AI” is closing rapidly. The creators bridging that gap aren’t those with the best prompts—they’re those who understand that precision and creativity aren’t opposites. They’re partners.

Your camera is waiting. The question isn’t whether you can control it—it’s what story you’ll tell once you do.

Zack Hart
