A lot of AI video discussion still sounds like a contest of spectacle. Which model looks most realistic? Which one goes most viral? Which one produces the most cinematic demo? That lens misses something important. For people who actually make content, the harder question is not whether a model can impress once. It is whether a model can be guided. That is why Seedance 2.0 deserves a closer look. In the way it is described and presented, it seems less obsessed with surprise for its own sake and more focused on turning creative intent into something closer to directed output.

That emphasis became especially noticeable after Seedance 2.0 was introduced in February 2026. It arrived during a period when AI video tools were becoming more capable, but also more confusing. Users had access to more models, more demos, and more promises, yet many still struggled to get exactly the scene they wanted. Seedance 2.0 stood out because its identity was built around motion stability, multimodal input, audio-video joint generation, and more granular creative control. Those are not random feature claims. Together, they describe a model that is trying to close the gap between generation and direction.

On SeeVideo, that identity becomes even clearer. The platform places Seedance 2.0 at the center of its video workflow and distinguishes it from other major models by focusing on multi-scene generation, audio input support, and flexible reference use. To me, that makes Seedance 2.0 less interesting as a buzzword and more interesting as a creative tool category: a model designed not only to produce clips, but to interpret creative signals in a more deliberate way.

Why Seedance 2.0 Drew Attention So Quickly

The model gained attention partly because AI video had already reached a threshold where good-looking output was no longer enough. Users wanted systems that felt less chaotic. They wanted fewer random artifacts, stronger continuity, and better alignment with what they were actually trying to communicate.

Seedance 2.0 appeared to answer that demand with a different emphasis. Instead of framing success only through visual realism, it pointed toward controllability. That change matters because the most frustrating part of AI video is often not low quality. It is low predictability.

The Problem Was Never Only Visual Quality

Plenty of AI clips can look impressive for a moment. The real challenge is whether the model behaves in a way that allows creative planning. If movement, lighting, framing, and progression all feel disconnected from the user’s intent, even a beautiful result becomes hard to use.

Control Became The More Serious Benchmark

That is what makes Seedance 2.0 distinct in my view. It pushes the conversation toward whether the system can follow direction across multiple dimensions, not just whether it can produce an attractive single shot.

What Makes Seedance 2.0 Different

The model’s uniqueness comes from how broad its control surface appears to be. It is officially described as supporting text, image, audio, and video inputs under a unified multimodal audio-video joint generation architecture. That may sound like product language, but the practical meaning is significant: users are not limited to expressing intent through one channel.

It Expands Prompting Into A Richer Creative Process

Most people still think of AI video prompting as writing a sentence and hoping it translates well. Seedance 2.0 seems built around a wider assumption. Sometimes text is not enough. Sometimes the best instruction is a reference image, a piece of audio, or an existing video signal that communicates timing, style, or motion.

This matters because creative direction rarely exists in one form. A designer may think visually. A filmmaker may think in shots. A marketer may think in brand references. A musician may think in rhythm. A model that can interpret multiple forms of input is operating on a more realistic view of creation.
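To make the idea of multiple input channels concrete, here is a minimal Python sketch of how a creator might bundle text, image, audio, and video references into one brief. The `CreativeBrief` class, its field names, and the file names are hypothetical and purely illustrative. They are not part of any Seedance 2.0 or SeeVideo API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CreativeBrief:
    """Hypothetical container for multimodal direction (not a real Seedance or SeeVideo API)."""

    text_prompt: Optional[str] = None
    image_refs: List[str] = field(default_factory=list)
    audio_refs: List[str] = field(default_factory=list)
    video_refs: List[str] = field(default_factory=list)

    def channels(self) -> List[str]:
        """Return the names of the input channels this brief actually uses."""
        used = []
        if self.text_prompt:
            used.append("text")
        if self.image_refs:
            used.append("image")
        if self.audio_refs:
            used.append("audio")
        if self.video_refs:
            used.append("video")
        return used


# Illustrative brief: the prompt and file names are made up for this example.
brief = CreativeBrief(
    text_prompt="A slow dolly shot through a rain-soaked night market",
    image_refs=["moodboard.png"],
    audio_refs=["ambient_rain.wav"],
)
print(brief.channels())  # ['text', 'image', 'audio']
```

The point of the sketch is only that a designer, a filmmaker, and a musician could each fill in a different field of the same brief.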

It Brings Direction Closer To Production Language

The official references to performance, lighting, shadow, and camera movement are especially telling.

Lighting And Shadow Suggest Stylistic Intent

When a model is described in terms of lighting and shadow control, it signals that scene mood is being treated as a serious part of the output rather than a decorative afterthought.

Camera Movement Signals Narrative Awareness

Camera behavior matters because it influences how a viewer reads a scene. A static image turned into motion is one thing. A sequence with intentional camera language is something else entirely.

It Places Strong Weight On Motion Stability

Motion stability sounds less glamorous than cinematic quality, but it may be more valuable. In practical use, unstable motion is often what breaks immersion first. A system that improves stability is not just improving aesthetics. It is improving usability.

How Seedance 2.0 Works Inside SeeVideo

SeeVideo gives Seedance 2.0 a clear role rather than presenting it as one interchangeable option among many. The platform positions it as the core video model for faster multi-scene generation and audio-guided creation.

Step One Selects Seedance 2.0 As The Engine

Users start by choosing the model. Seedance 2.0 is the natural choice on the platform when the project needs more than a single visual moment. It is also the main option when the user wants broader reference flexibility.

Step Two Starts From Text Or Image Inputs

SeeVideo keeps the workflow straightforward by letting users begin from text or image inputs. The broader official model descriptions also highlight support for audio and video references, which helps explain why Seedance 2.0 is described as unusually flexible.

Step Three Generates Scenes And Tests Direction

Once the input is in place, users generate the output and refine it if necessary. This is where Seedance 2.0’s core promise matters most. A controllable model should make revision more productive rather than making every rerun feel unrelated to the last.

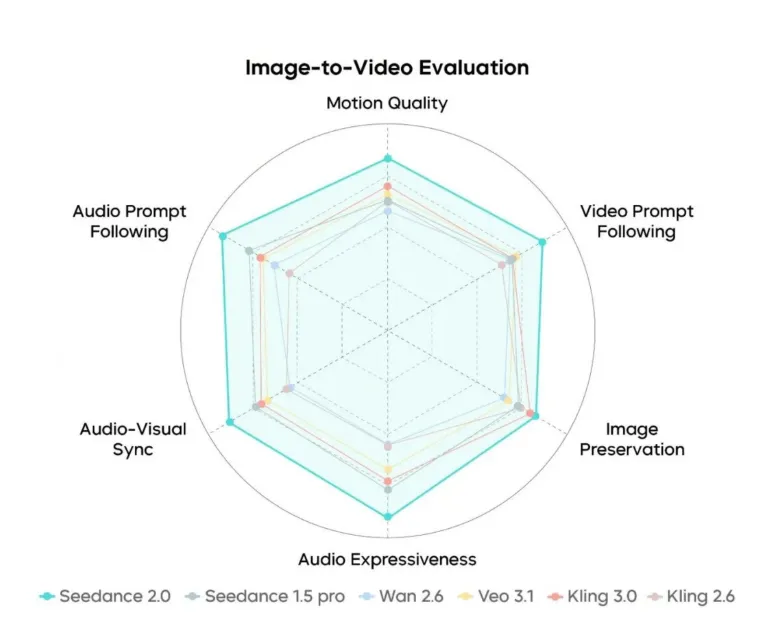
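One way to keep reruns comparable, assuming you note down your own settings outside the tool, is to record what changed between passes. The sketch below is a hypothetical helper, not a SeeVideo feature. The setting names (`prompt`, `camera`, `lighting`) are invented for illustration.

```python
from typing import Any, Dict, Tuple


def changed_settings(
    previous: Dict[str, Any], current: Dict[str, Any]
) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (old, new)} for every setting that differs between two passes."""
    keys = sorted(set(previous) | set(current))
    return {
        key: (previous.get(key), current.get(key))
        for key in keys
        if previous.get(key) != current.get(key)
    }


# Hypothetical notes a creator might keep for two consecutive passes.
pass_1 = {"prompt": "night market in the rain", "camera": "handheld", "lighting": "neon"}
pass_2 = {"prompt": "night market in the rain", "camera": "slow dolly", "lighting": "neon"}

diff = changed_settings(pass_1, pass_2)
print(diff)  # {'camera': ('handheld', 'slow dolly')}
```

If only one setting changes per pass, any difference in the output is easier to attribute to that change, which is the kind of productive revision a controllable model is supposed to reward.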

Step Four Places The Result In A Larger Model Ecosystem

On SeeVideo, Seedance 2.0 can be compared with other tools such as Veo 3 and Sora 2. That comparison layer is useful because it reveals what makes Seedance 2.0 special. It is not trying to be every model at once. It is strongest where structure, control, and multimodal direction matter.

How Seedance 2.0 Compares In Creative Terms

The easiest way to understand the model’s role is to compare its style of value with what other leading models are often known for.

| Model Focus | Primary Strength | Where Seedance 2.0 Feels Distinct |
| --- | --- | --- |
| Photorealistic systems | Visual realism and believable footage | Adds broader reference control and stronger multi-scene emphasis |
| Cinematic storytelling systems | Mood and narrative texture | Leans more into controllable generation signals |
| Lower-cost fast generators | Speed and volume | Aims for more structured and directed output |
| Simple text-to-video tools | Easy entry for beginners | Expands beyond text into a richer creative workflow |

Its Edge Is Not Only Quality

It would be too simple to say Seedance 2.0 wins because it looks better. Its more interesting edge is that it seems designed to listen better. That is a subtle difference, but it is often the dividing line between a demo model and a production tool.

Its Real Strength Is Interpretive Flexibility

A user can bring different kinds of source material into the process. That is powerful because it makes the model more adaptable to real-world workflows where ideas arrive in fragments rather than in perfect prompt form.

Where Seedance 2.0 Is Most Useful

The model seems especially well suited for projects where intent is layered and where the output needs more structure than a simple motion clip.

Brand Storytelling Needs More Than Surface Beauty

Brand videos often need consistent tone, controlled movement, and a clearer sequence of ideas. Seedance 2.0 looks well matched to that kind of work because its official positioning emphasizes direction and scene control.

Concept Development Benefits From Stronger References

Creative teams often begin with reference boards, test images, timing ideas, and rough examples. A model that can translate more of that input into the video process becomes easier to integrate into actual project development.

Short Sequences Gain From Multi-Scene Logic

Even when the final work is brief, multiple scenes often create a stronger sense of progression. That is where Seedance 2.0’s multi-scene orientation seems more meaningful than many one-shot generators.

What Users Should Still Keep In Mind

A balanced understanding is important. Seedance 2.0 may offer more control, but it does not remove the need for judgment.

More Control Also Means More Responsibility

When a system gives users more ways to guide output, the quality of those references and prompts matters even more. Strong tools do not remove decision-making. They make it more visible.

Iteration Is Still Normal

Even a more controllable model will usually require multiple passes. That is not a flaw so much as a realistic part of serious AI video use.

Comparisons Still Matter

Seedance 2.0 is distinctive, but not universal. Some ideas may still work better in other models, depending on whether the priority is native audio, a specific cinematic feel, or some other quality.

Why Seedance 2.0 Feels Like A Meaningful Step

What makes Seedance 2.0 noteworthy is not just that it launched in February 2026, though timing matters. It arrived at a moment when creators were asking for more than generated motion. They were asking for a model that could take direction in a fuller sense.

That is why its most important difference may be conceptual rather than cosmetic. Seedance 2.0 treats AI video less like a slot machine and more like a guided process. It invites creators to work with text, image, audio, and reference-based direction in a more integrated way. On SeeVideo, that makes the model especially understandable because the platform turns those capabilities into an accessible workflow.

In the end, Seedance 2.0 matters because it reflects a more mature idea of what AI video should be. Not just impressive. Not just fast. More steerable, more layered, and more aligned with how creative decisions are actually made.

Zack Hart
